Configure environments

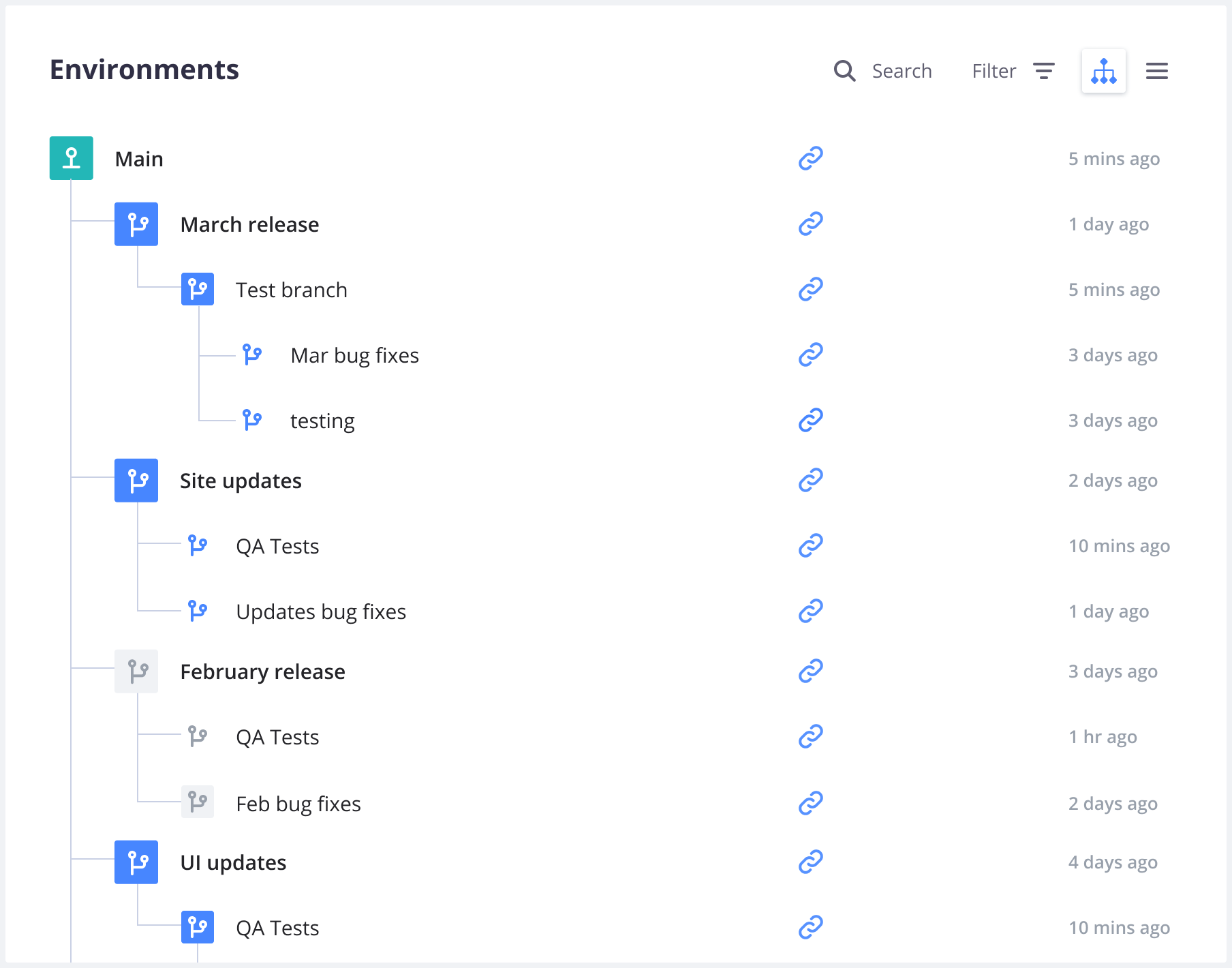

From your project’s main page in the Console, you can see all your environments as a list or a project tree:

In this overview, the names of inactive environments are lighter. Selecting an environment allows you to see details about it, such as its activity feed, services, metrics, and backups.

Activity Feed

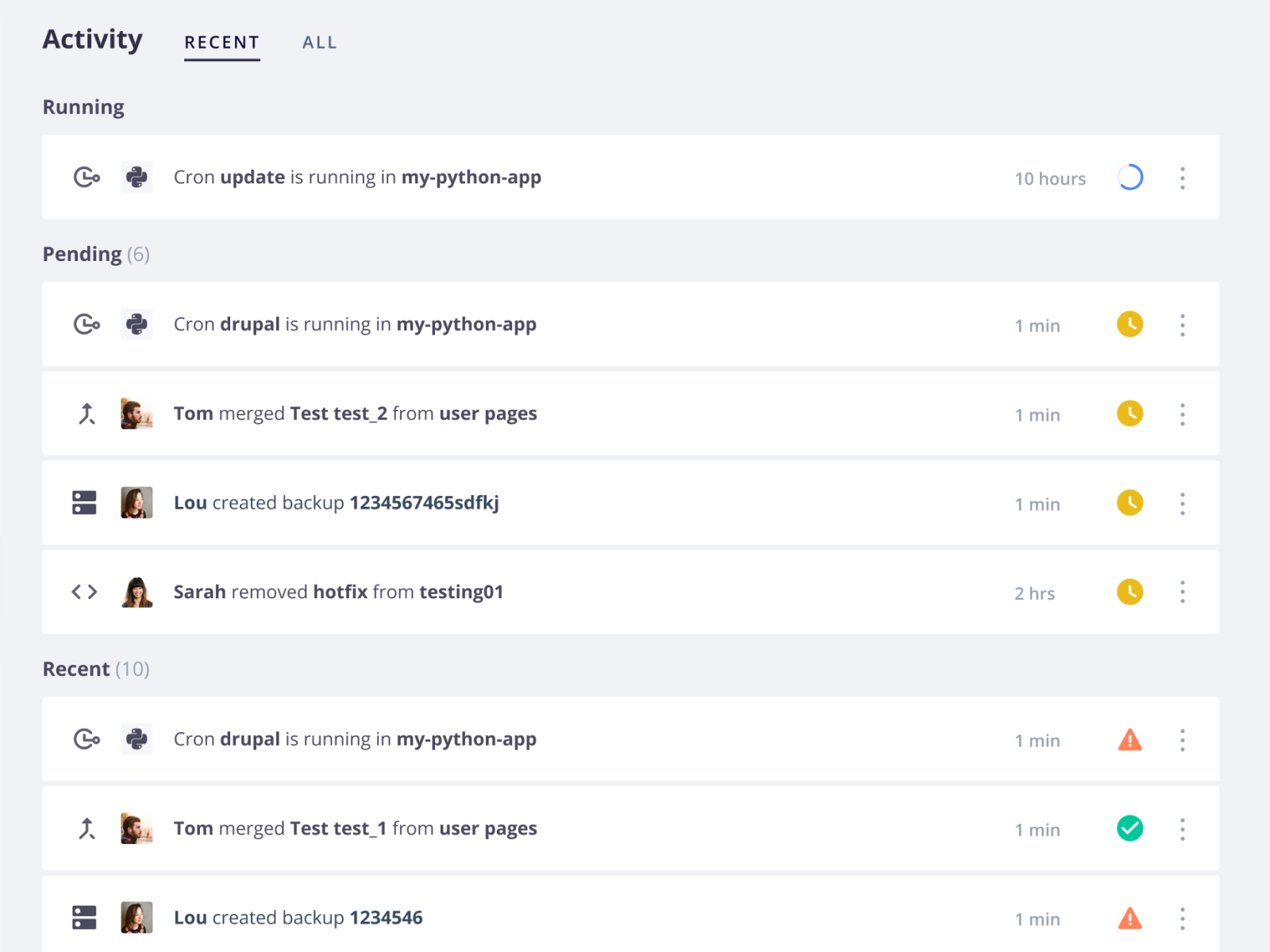

When you access an environment in the Console, you can see its activity feed. This allows you to check which activities have happened or are currently happening on the selected environment:

You can filter activities by type (such as merge, sync, or redeploy).

Actions on environments

Each environment offers ways to keep environments up to date with one another:

- Branch to create a new child environment.

- Merge to copy the current environment into its parent.

- Sync to copy changes from its parent environment into the current environment.
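The direction of each action can be pictured with a toy model. The sketch below is not Platform.sh code: the `Environment` class, its `code` list, and the environment names are hypothetical, and it only tracks code changes, ignoring everything else a real environment carries.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Environment:
    """Toy stand-in for an environment: a name, a parent, and a list of changes."""
    name: str
    parent: Optional["Environment"] = None
    code: list[str] = field(default_factory=list)  # changes, oldest first

    def branch(self, name: str) -> "Environment":
        # Branch: create a child that starts as a copy of this environment.
        return Environment(name=name, parent=self, code=list(self.code))

    def merge(self) -> None:
        # Merge: copy this environment's changes into its parent.
        if self.parent is None:
            raise ValueError(f"{self.name} has no parent to merge into")
        for change in self.code:
            if change not in self.parent.code:
                self.parent.code.append(change)

    def sync(self) -> None:
        # Sync: copy the parent's changes into this environment.
        if self.parent is None:
            raise ValueError(f"{self.name} has no parent to sync from")
        for change in self.parent.code:
            if change not in self.code:
                self.code.append(change)


main = Environment("main", code=["initial commit"])
feature = main.branch("feature-x")

feature.code.append("add search page")
feature.merge()  # main now also contains "add search page"

main.code.append("hotfix")
feature.sync()   # feature-x now also contains "hotfix"
```

In this model, Merge moves changes up the tree (child to parent) and Sync moves them down (parent to child), which matches the descriptions in the list above.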

There are also additional options:

- Settings to configure the environment.

- More to get more options.

- URLs to access the deployed environment from the web.

- SSH to access your project using SSH.

- Code

  - CLI for the command to get your project set up locally with the Platform.sh CLI.

  - Git for the command to clone the codebase via Git.

    If you’re using Platform.sh as your primary remote repository, the command clones from the project. If you have set up an external integration, the command clones directly from the integrated remote repository.

    If the project uses an external integration to a repository that you haven’t been given access to, you can’t clone until your access has been updated. See how to troubleshoot source integrations.

Environment URL

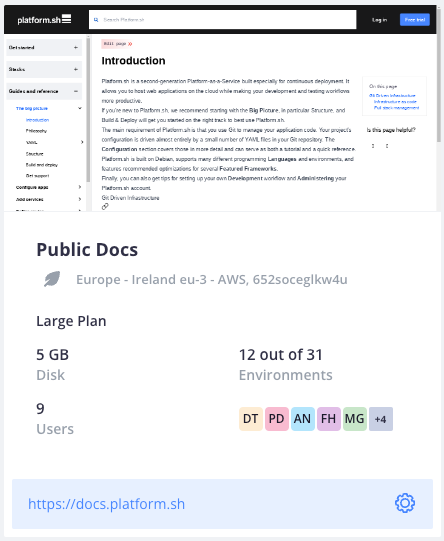

When you access an environment in the Console, you can view its URL:

While the environment is loading in the Console, a Waiting for URL... message is displayed instead of the URL.

If this message isn’t updated once your default environment’s information is loaded, follow these steps:

- Check that you have defined routes for your default environment.

- Verify that your application, services, and routes configurations are correct.

- Check that your default environment is active.

Environment settings

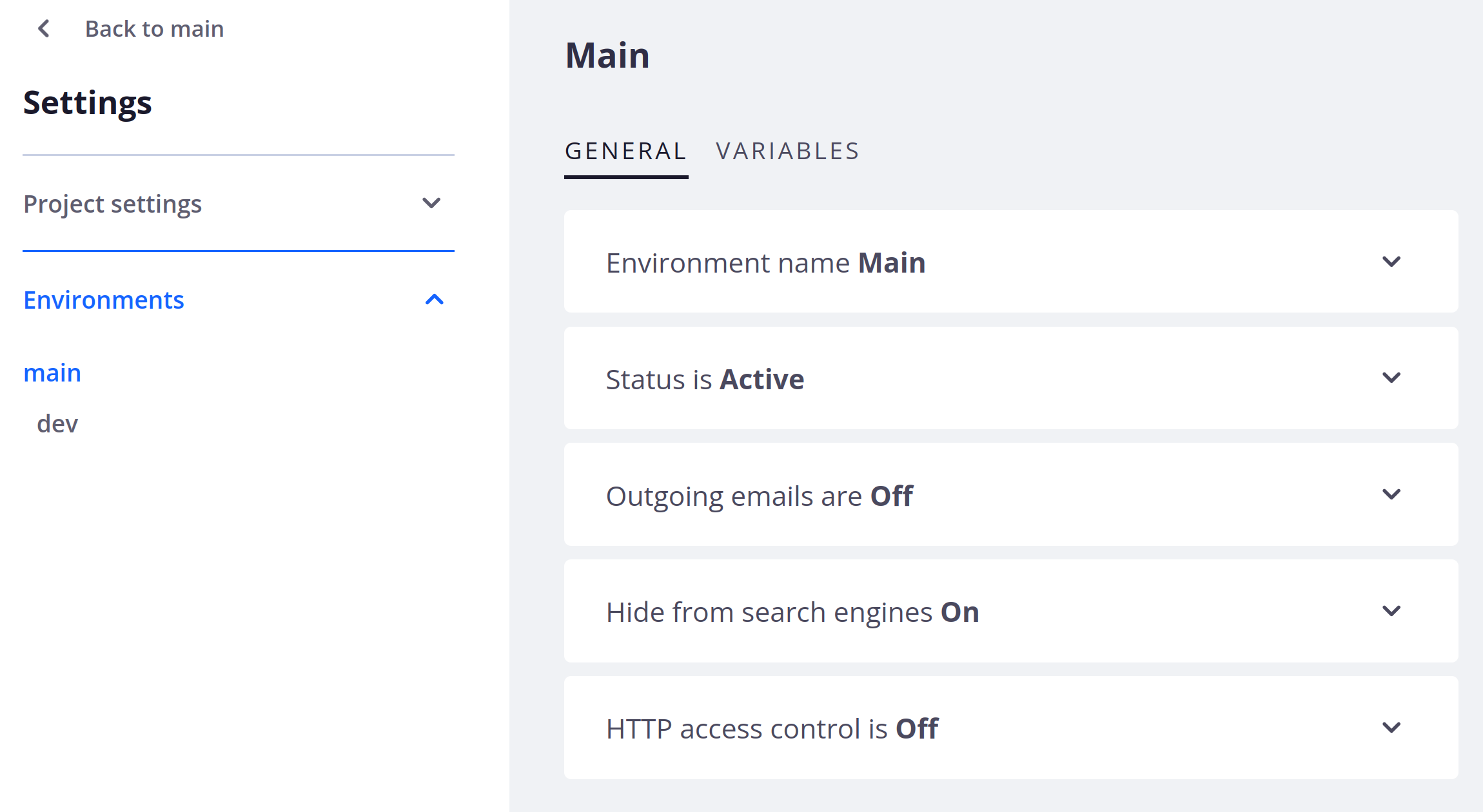

To access the settings of an environment, click Settings within that environment.

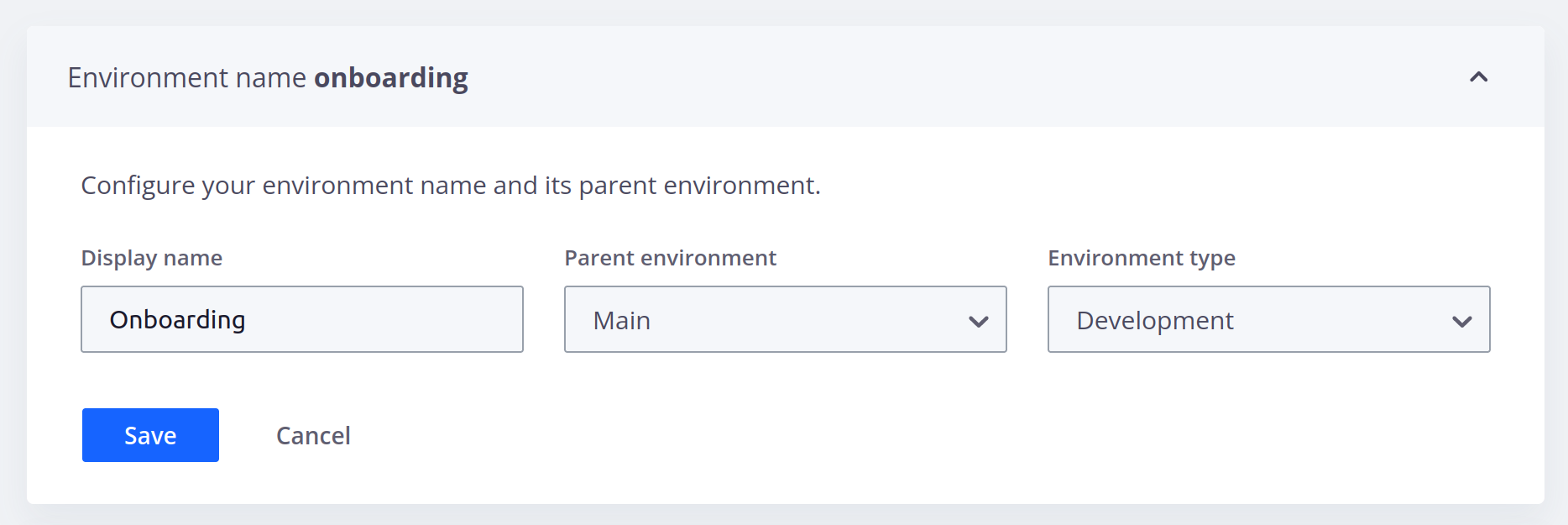

Environment name

Under Environment name, you can edit the name and type of your environment and view its parent environment:

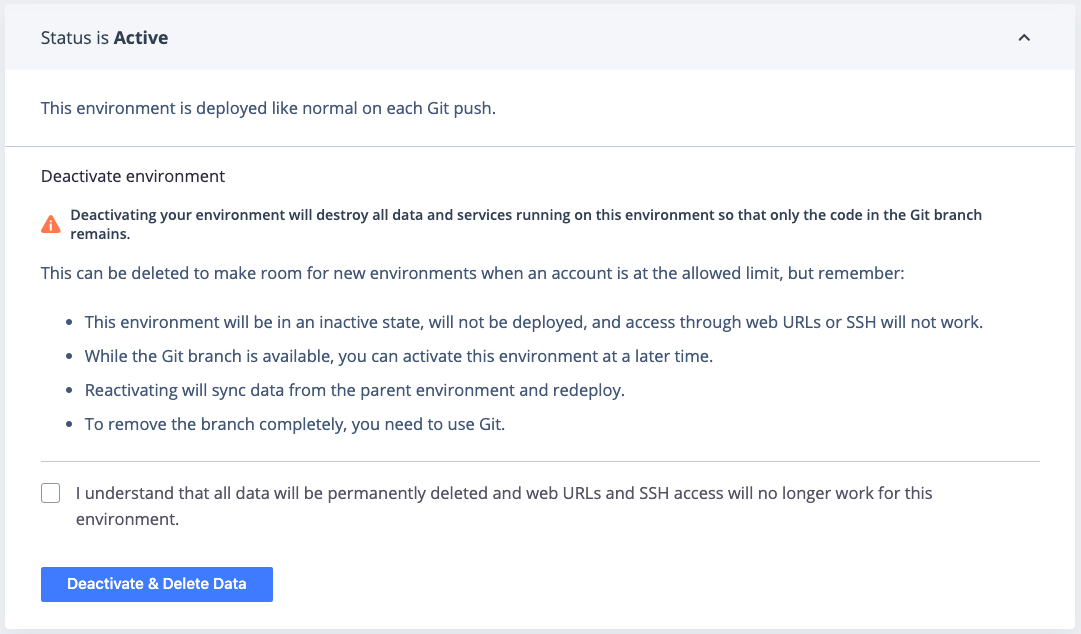

Status

Under Status, you can check whether or not your environment is active.

For preview environments, you can change their status.

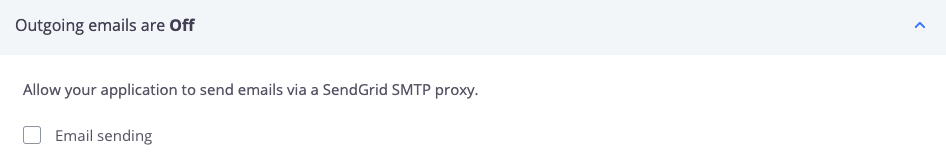

Outgoing emails

Under Outgoing emails, you can allow your environment to send emails:

Hide from search engines

Under Hide from search engines, you can tell search engines to ignore the site:

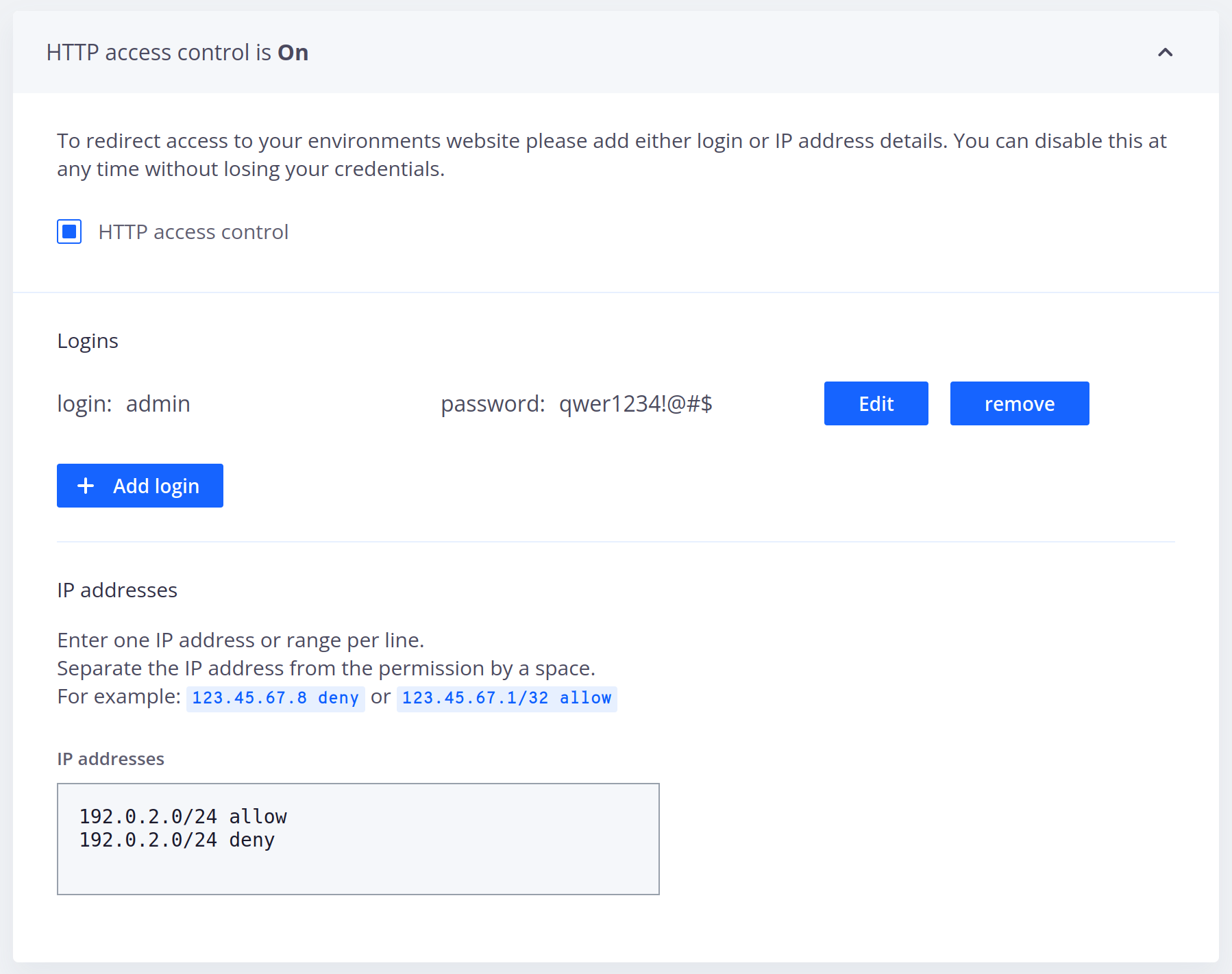

HTTP access control

Under HTTP access control, you can control access to your environment using HTTP methods:

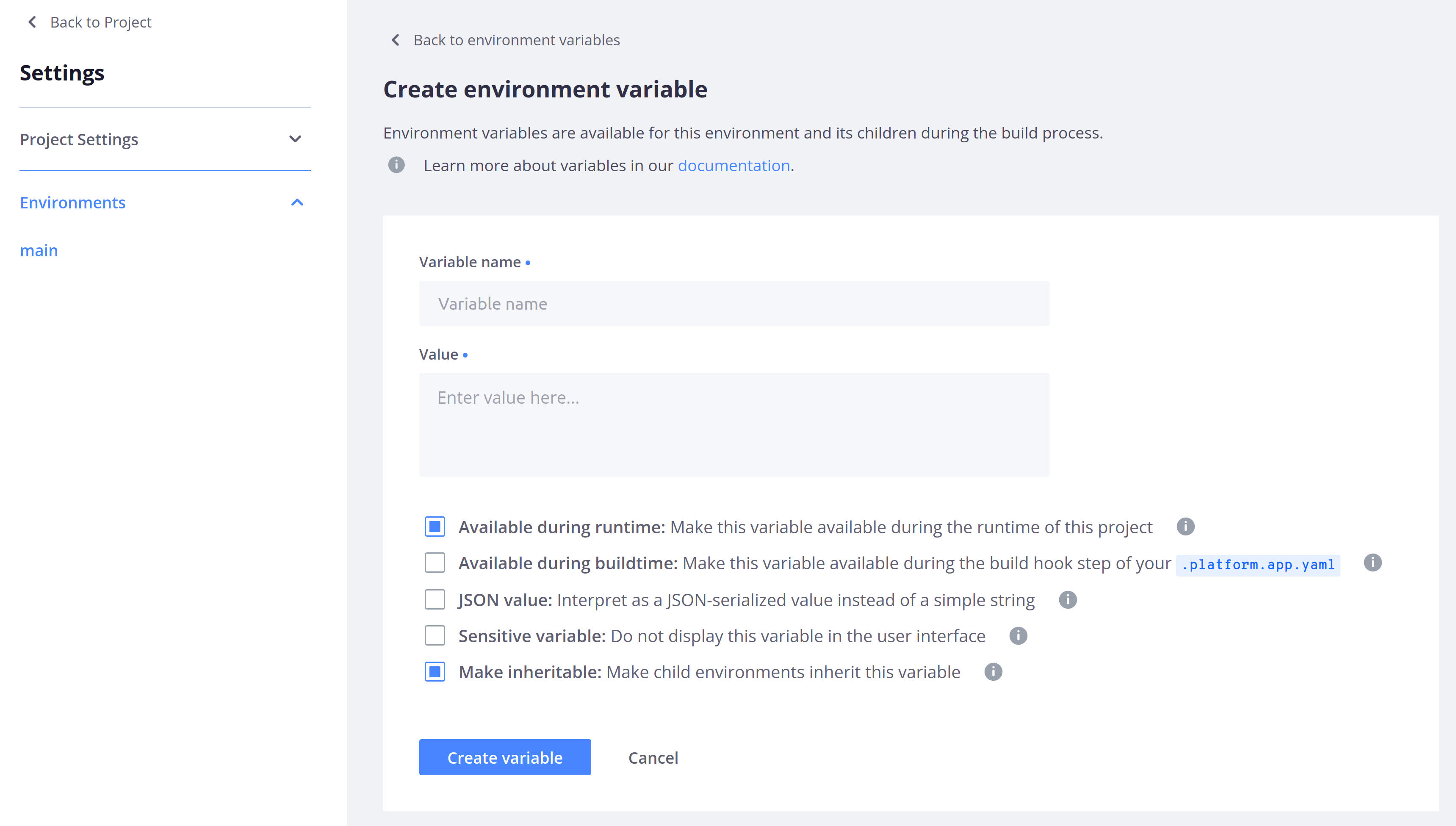
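Once access control is enabled, scripts that call the environment need to send credentials. The sketch below assumes the environment is protected with a username and password through HTTP basic authentication; the URL and credentials are placeholders, and `build_request` is a hypothetical helper name.

```python
import base64
import urllib.request


def build_request(url: str, username: str, password: str) -> urllib.request.Request:
    """Build a GET request carrying HTTP basic authentication credentials."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    request = urllib.request.Request(url)
    request.add_header("Authorization", f"Basic {token}")
    return request


# Placeholder URL and credentials, for illustration only.
request = build_request("https://example.com/", "reviewer", "s3cret")

# To actually send the request:
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```

The network call is left commented out so the example runs without a live environment.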

Variables

Under Variables, you can define environment variables.
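As an illustration of how an app might read such variables at runtime, here is a minimal sketch. It assumes the variables are exposed to the app through the `PLATFORM_VARIABLES` environment variable as base64-encoded JSON; the variable name `api_base_url` and its value are hypothetical, and the example sets `PLATFORM_VARIABLES` itself so that it runs outside a deployed environment.

```python
import base64
import json
import os


def read_platform_variables() -> dict:
    """Decode PLATFORM_VARIABLES (base64-encoded JSON); return {} if unset."""
    encoded = os.environ.get("PLATFORM_VARIABLES")
    if not encoded:
        return {}
    return json.loads(base64.b64decode(encoded))


# Simulate the runtime for this example. On a deployed environment the
# value is already set, so this assignment would not be needed.
os.environ["PLATFORM_VARIABLES"] = base64.b64encode(
    json.dumps({"api_base_url": "https://api.example.com"}).encode("utf-8")
).decode("ascii")

variables = read_platform_variables()
print(variables.get("api_base_url"))
```

Returning an empty dictionary when the variable is unset keeps the same code usable in local development, where no platform-provided value exists.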

Service information

For each environment, you can view information about how your routes, services, and apps are currently configured.

To do so, click Services. By default, you see configured routes.

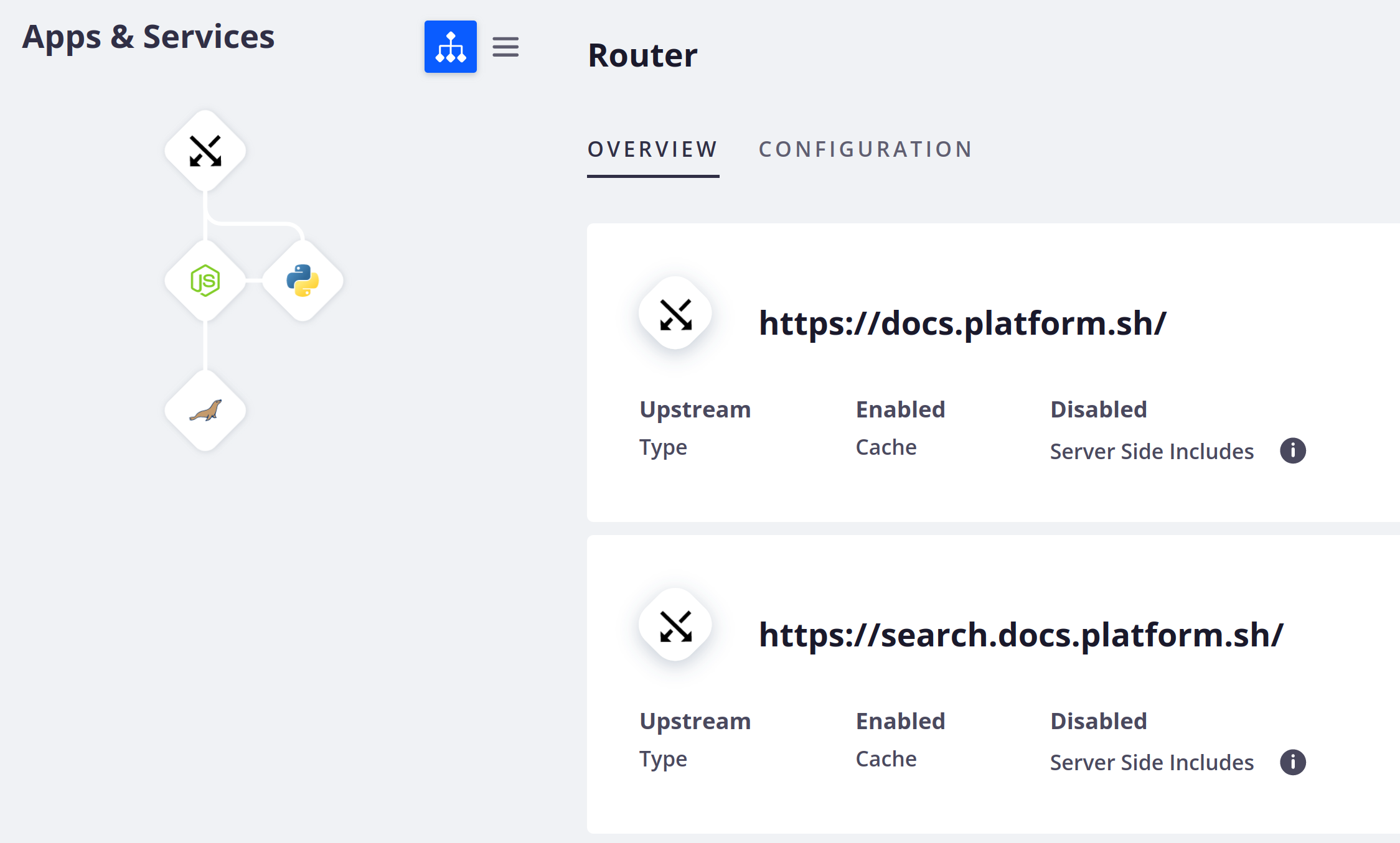

Routes

The Router section shows a list of all the routes configured on your environment. You can see each route’s type and check whether caching and server-side includes have been enabled for it:

To view the configuration file where your routes are set up, click Configuration.
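The same route list can also be inspected from inside a running app. The sketch below assumes the routes are exposed through the `PLATFORM_ROUTES` environment variable as a base64-encoded JSON object keyed by URL; the sample routes are made up for illustration, and `list_routes` is a hypothetical helper name.

```python
import base64
import json
import os


def list_routes() -> list[tuple[str, str]]:
    """Return (url, type) pairs decoded from PLATFORM_ROUTES, sorted by URL."""
    encoded = os.environ.get("PLATFORM_ROUTES")
    if not encoded:
        return []
    routes = json.loads(base64.b64decode(encoded))
    return sorted(
        (url, details.get("type", "unknown")) for url, details in routes.items()
    )


# Sample data for illustration only; on a deployed environment the
# value is set by the platform.
sample = {
    "https://example.com/": {"type": "upstream", "upstream": "app"},
    "https://www.example.com/": {"type": "redirect", "to": "https://example.com/"},
}
os.environ["PLATFORM_ROUTES"] = base64.b64encode(
    json.dumps(sample).encode("utf-8")
).decode("ascii")

for url, route_type in list_routes():
    print(f"{route_type:9} {url}")
```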

Applications

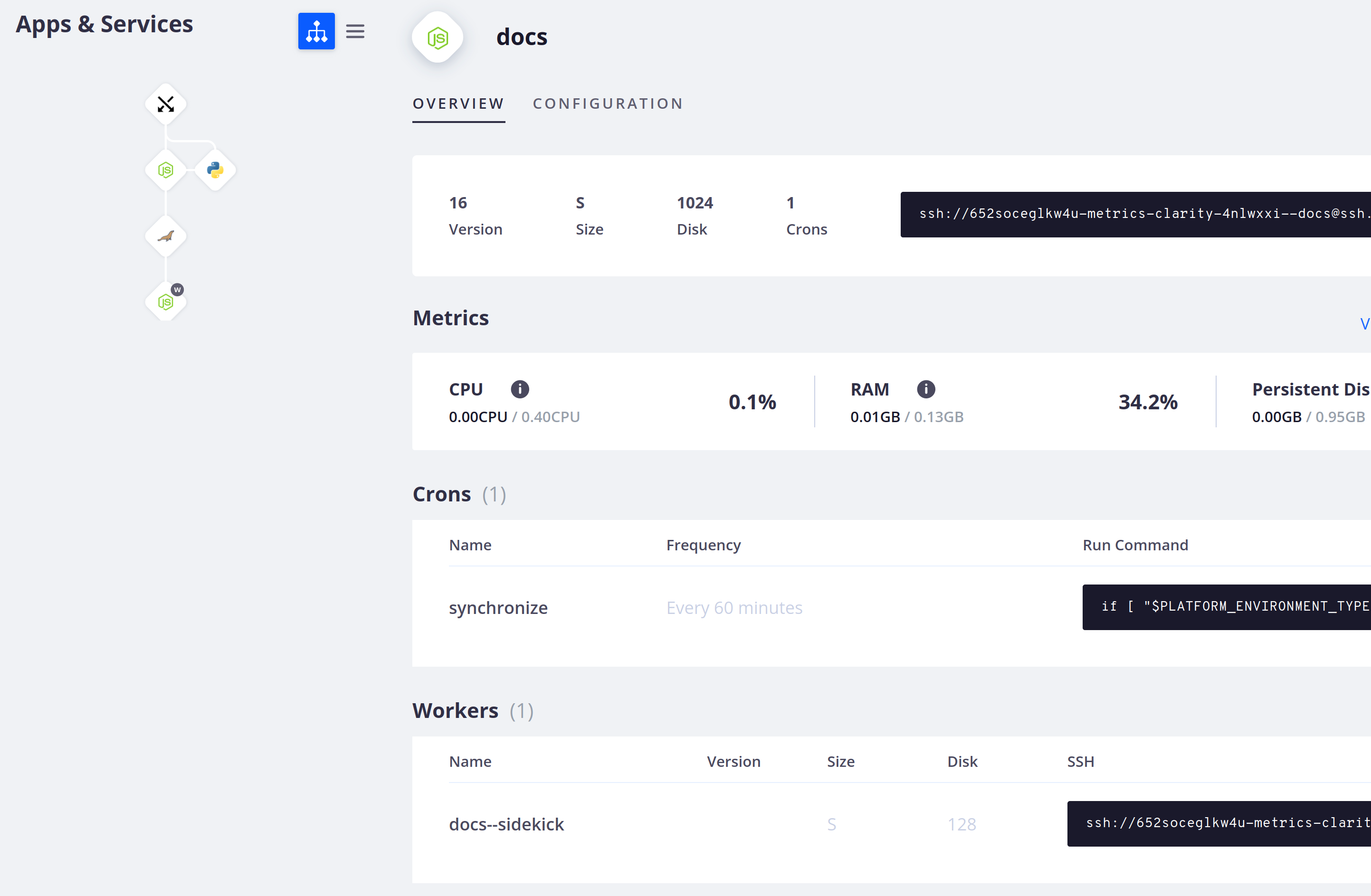

To see detailed information about an app container, select it in the tree or list on the left-hand side:

The Overview tab gives you information about your app. You can see:

- The language version, the container size, the amount of persistent disk, the number of cron jobs, and the command to SSH into the container.

- A summary of metrics for the environment.

- All cron jobs with their name, frequency, and command.

- All workers with their name, size, amount of persistent disk, and command to SSH into the container.

To view the configuration file where your app is set up, click Configuration.

Services

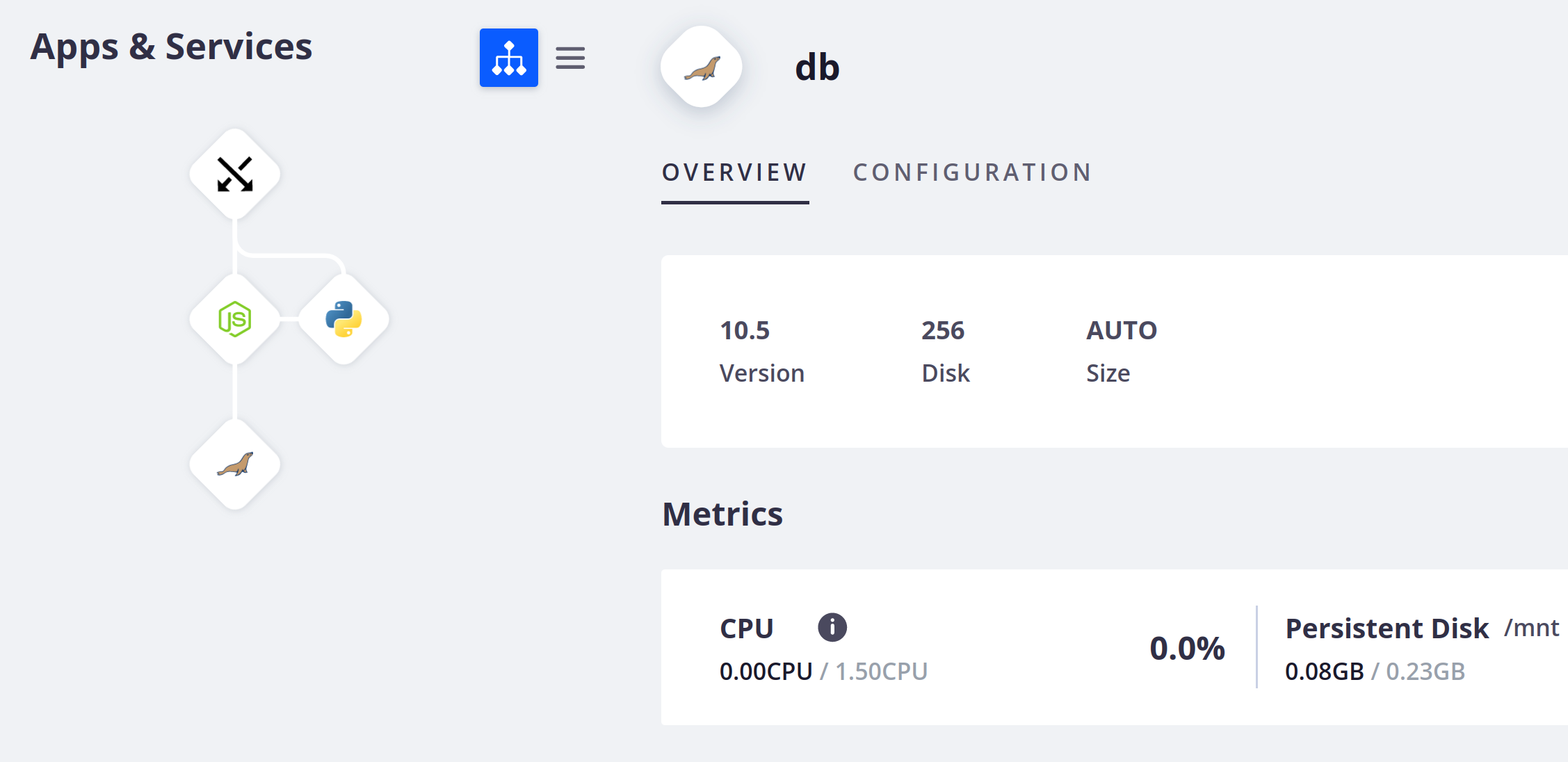

To see detailed information about a running service, select it in the tree or list on the left-hand side:

The Overview tab gives you information about the selected service. You can see the service version, the container size, and, if you’ve configured a persistent disk, the disk size. You can also see a summary of metrics for the environment.

To view the configuration file where your services are set up, click Configuration.